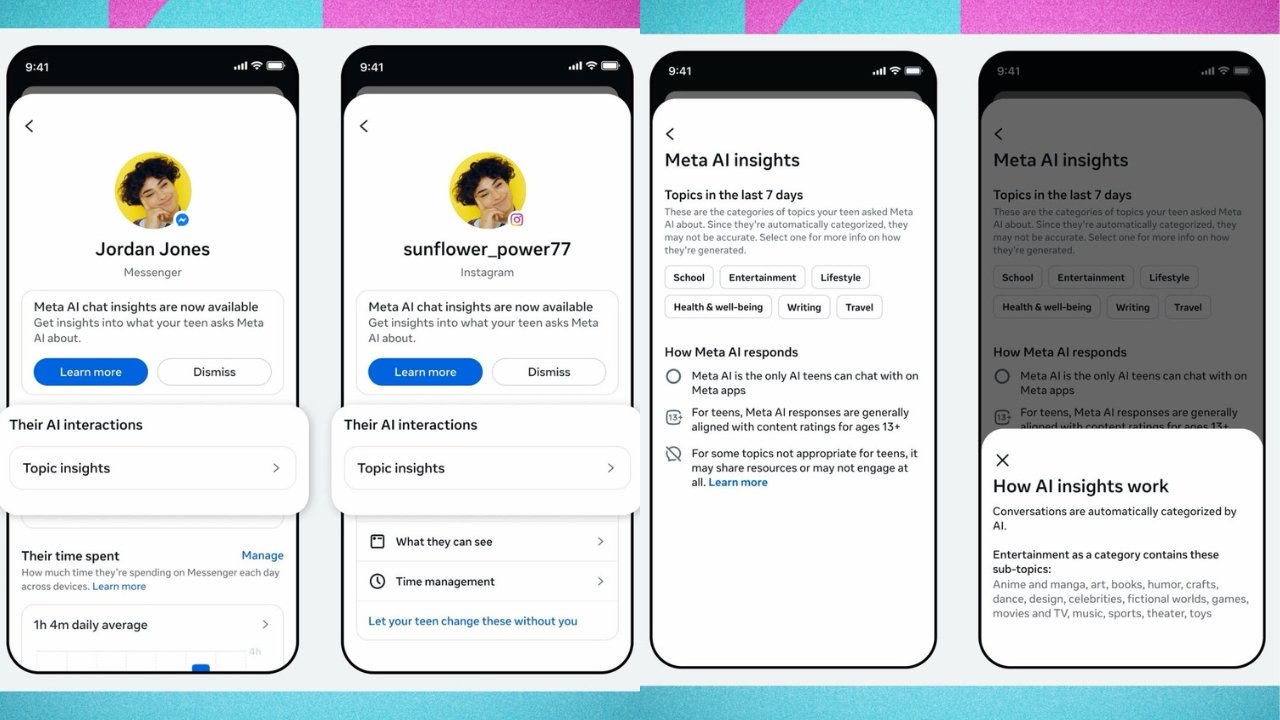

Meta has announced an update to its teen supervision tools, which will now enable parents to view what their kids are asking its artificial intelligence chatbot. The update adds a new element to Meta’s Family Center tools, giving parents access to a list of their kids’ Meta AI queries.

However, there is a significant limitation: parents will see broad category overviews of the types of questions being asked, not the specific content of the queries.

How It Works – Categories, Not Specifics

Meta said that topics are automatically categorized by AI, so the list provides a broad overview of queries rather than specific details. The idea is to give parents some level of transparency in case their kids are researching areas of concern.

| Category | Sub-Categories (Examples) |
|---|---|
| School | Homework, subjects, assignments |
| Entertainment | Movies, music, games |
| Lifestyle | Fashion, food, holidays |
| Travel | Destinations, planning |
| Writing | Essays, creative writing |
| Health and Wellbeing | Fitness, physical health, mental health |

“Parents can tap on a topic to see the different categories that fall within each one. For example, categories within Lifestyle include fashion, food, and holidays, and categories within Health and Wellbeing include fitness, physical health, and mental health.”

The Limitation – Broad, Not Specific

The broad nature of these categories presents a challenge.

| Challenge | Implication |
|---|---|
| Extremely broad topics | “Health” could mean anything from a cold to a crisis |
| No specific queries | Parents don’t see the actual questions |
| Potential false alarms | May worry parents unnecessarily |
| Missing context | A query about “mental health” could be for a school project |

“If a teen is looking up health-related subjects, that could be a major concern, a minor query, or even an unrelated question, depending on how the tool categorizes each. As such, this oversight could end up worrying parents unnecessarily, even if it has the potential to provide more insight into their child’s activity.”

Special Alerts for Suicide and Self-Harm

Meta acknowledged that the category system may not be sufficient for the most sensitive issues. For suicide and self-harm, the company is going further.

“While the insights are designed to give parents greater visibility into the general topics their teens are asking Meta AI about, for sensitive issues related to suicide and self-harm, we’re going further. We recently announced that we’re developing new alerts to let parents know if their teen tries to engage in conversations related to suicide or self-harm with Meta AI — and we’ll have more to share on those alerts soon.”

| Alert Type | Trigger | Action |
|---|---|---|
| Standard categories | General AI queries | Parent views broad topic list |
| Sensitive alerts (in development) | Suicide/self-harm queries | Parent notified directly |

Conversation Starters for Parents

Meta has worked with the Cyberbullying Research Center to develop conversation starters to help parents ask their teens about their AI activity.

“These conversation starters are available on the Family Center website, and parents can also access them through a link in the new Insights tab.”

This acknowledges that simply seeing a category – even a concerning one – is not enough. Parents need guidance on how to approach difficult conversations with their teens.

Availability – Where and When

| Region | Status |
|---|---|
| United States | Available now |
| United Kingdom | Available now |
| Australia | Available now |
| Canada | Available now |
| Brazil | Available now |
| Other regions | Coming soon |

The new AI chatbot insights are now available for parents supervising Teen Accounts in these initial markets.

The Bigger Picture – Meta’s Teen Safety Push

This update is part of Meta’s broader effort to address concerns about teen safety on its platforms.

| Previous Measures | New Measures |
|---|---|
| Teen Accounts (restricted settings for under-18s) | AI query monitoring |
| Parental supervision tools (screen time, privacy) | Category-level insights |
| Content restrictions (sensitive content filtering) | Suicide/self-harm alerts (in development) |

Meta has faced significant scrutiny from regulators, parents, and advocacy groups over the impact of its platforms on teen mental health. These tools are part of the company’s response.

Privacy Concerns – Balancing Safety and Autonomy

The update raises questions about teen privacy and the balance between parental oversight and adolescent autonomy.

| Pro-Parental Oversight | Pro-Teen Privacy |
|---|---|
| Parents have a right to know about risks | Teens need space to explore and learn |
| Mental health crises require intervention | Constant monitoring erodes trust |
| AI chatbots can give dangerous advice | Categories are too broad to be meaningful |

Meta’s approach – categories rather than specific queries – attempts to strike a balance. But it may satisfy neither side fully.

Why This Matters – Teens and AI Chatbots

Teens are increasingly using AI chatbots for a range of purposes, each carrying its own level of risk:

| Use Case | Potential Risk |
|---|---|
| Homework help | Generally low risk |
| Emotional support | AI may give inappropriate advice |
| Health questions | Misinformation or harmful suggestions |
| Social advice | May not understand nuanced situations |

“Maybe kids shouldn’t be relying on AI for these types of questions, but in theory this topic list can give parents a means to raise issues with teens and to ensure better information flow. Though it could also end up being an awkward prompt to start relevant conversations.”

Future Improvements

Meta said it will continue to improve and refine the system to make it a more valuable and useful resource over time, based on:

- Feedback from users

- Input from expert advisors

The company has not provided a specific timeline for the suicide/self-harm alerts mentioned in the announcement.

A Step Forward – With Limitations

Meta’s decision to let parents see their teens’ AI queries – at least at the category level – is a significant step for teen safety. For the first time, parents can get some visibility into what their kids are asking chatbots about.

But the limitations are real. Categories are broad. Specific queries remain private. And parents may be left wondering whether a “Health and Wellbeing” category means their teen is researching fitness tips or experiencing a mental health crisis.

The forthcoming suicide and self-harm alerts are intended to address the most critical scenarios, and the conversation starters developed with the Cyberbullying Research Center may help parents navigate difficult discussions.

As Meta refines the system, the key question remains: Will this tool actually help parents keep teens safe – or will it create more anxiety without providing actionable information?

For now, the tool is available in the U.S., U.K., Australia, Canada, and Brazil, with more regions to follow. Parents with teens on Meta platforms can access the new insights through the Family Center.

The conversation about teen safety and AI is just beginning.